IWASS 2026 participants: Please find logistics information and updates below.

Please contact us should you have any questions.

IWASS is free to attend, and no papers or presentations are required—our focus is on impactful discussions and insights. It will be held in person on June 13th and 14th, 2026 at the University of Minho in Braga, Portugal. The workshop will kick off at 12:30 p.m. on June 13th and finish at 6:00 p.m. on the 14th, ensuring we will have time packed with insightful discussions, right in time for the ESREL 2026 reception!

Confirmed Speakers

We are happy to share our list of fantastic confirmed speakers! Join us in Braga to hear from:

Silvia Vock, The Federal Institute for Occupational Safety and Health (Germany) (LinkedIn)

Andrew Allamby, Zoox (LinkedIn)

Stephan Marwedel, Airbus (LinkedIn)

Thor Myklebust, SINTEF (The Foundation for Industrial and Technical Research) (LinkedIn)

Gesa Praetorius, Swedish National Road and Transport Research Institute (LinkedIn)

Keynote Presentations

Silvia Vock, The Federal Institute for Occupational Safety and Health

“Safety-Critical AI in Industrial Applications: Between Regulatory Ambition and Open Research Questions”

As a trained materials scientist with a PhD in the field of magnetic imaging, Silvia Vock combines her scientific background with experience in applied research and development at Fraunhofer, including early work on machine learning in additive manufacturing. Since 2019, she has been working at the Federal Institute for Occupational Safety and Health and has led an AI research team since 2022. The team’s work focuses on machine learning in safety-critical industrial applications, with a specialization in robustness evaluation and robust training methods. Her team works with real-world datasets collected in collaboration with partners from industry, including construction machinery, automated guided vehicles (AGVs) in intralogistics, robotics applications, and machine tools. The work spans computer vision as well as time-series-based anomaly detection. The team collaborates closely with industrial partners, academic institutions, research institutes, certification bodies, and regulatory authorities.

Abstract: Safety-critical applications of AI in industrial machines and autonomous systems are on the horizon. The regulatory frameworks meant to govern them are being finalized now. The EU AI Act and the new Machinery Regulation (EU) 2023/1230 are both in force but not yet fully applicable. Most high-risk AI provisions take effect in 2026, with AI embedded in regulated machinery products following in 2027. Meanwhile, the harmonized technical standards needed to operationalize conformity assessment for these systems are still under development and already running behind schedule. This creates the fundamental challenge that requirements must be defined before sufficient operational experience exists, and before the research community has established validated methods for specifying and testing AI properties such as robustness, behavioral consistency in open environments, or explainability. This talk reflects on this situation from the perspective of applied research and policy advice at a federal institute for occupational safety and health. It asks what formalizable and independently validatable requirements for safety-critical AI could realistically look like, where the open research questions lie, and what responsibilities fall to standardization bodies, researchers, and regulators.

Andrew Allamby, Zoox Inc.

“AV Operational Safety: Looking back to better predict the future”

Andrew Allamby currently works at Zoox Inc. as a Technical Lead Manager (TLM) in the Safety Strategy and Operations organization, where his team focuses on Reactive Operational Safety. In this role, Andrew focuses on building operational safety processes and optimizing safety frameworks for fleet scaling. Prior to joining Zoox, he started his work in the AV industry at Cruise Automation as a Strategic Operations lead, where he was responsible for large-scale, high-priority programs. In this role, he delivered key initiatives including high-speed enablement, validation tool building, and new market launches, while also managing the planning and execution for the Safety & Systems organization. Before transitioning to autonomous vehicles, Andrew spent over 15 years in biotech, specializing in OEM R&D for complex robotic systems as a Principal Systems Engineer at Roche Molecular Systems and as a program manager at Abbott Diabetes Care. Andrew has been in industry for over 20 years, holds a Bachelor of Science in Mechanical Engineering from Santa Clara University, and currently lives in San Francisco, California.

Abstract: Coming Soon!

Thor Myklebust, SINTEF Digital

“Who’s in Control: You or the car?”

Thor Myklebust is a senior researcher at SINTEF Digital. He has worked in assessment and certification of products and systems since 1987, for the National Metrology Service, Aker Maritime, Nemko and SINTEF. Myklebust has participated in several international committees since 1988. He is a member of committees on safety (NEK/IEC 65), the IEC 61508 maintenance committee “generic functional safety”, ISO/IEC TR 5469 & ISO/IEC 22440 “Artificial intelligence — Functional safety and AI systems”, and railway (NEK/CENELEC/TC 9), and a stakeholder for UL4600 on autonomous products. He is co-author of three Springer books, The Agile Safety Case, SafeScrum, Proof of Compliance and The Agile Safety Plan. He has published more than 250 papers and reports. He is one of the founders of SafeScrum®, The Agile Safety Case™ and The Agile Safety Plan™.

Abstract: Modern vehicles increasingly combine artificial intelligence, driver assistance, connectivity, software updates, and partial automation. This changes not only how vehicles behave, but also how responsibility must be understood when something goes wrong. The central question is no longer only whether the driver made an error, but whether the vehicle, the automation, the software, the sensors, the human-machine interface, or the manufacturer’s data interpretation contributed to the event.

This presentation examines the relationship between AI-enabled vehicle functions, driver assistance, autonomy, and safety assurance. It uses the Tesla taxi crash at Torgallmenningen in Bergen as a central case. In that incident, the driver claimed uncontrolled acceleration and braking attempts, while vehicle data reportedly indicated full accelerator input. Later reporting described six seconds of missing data and a missing network card from the vehicle’s main computer. The case illustrates a key forensic problem in software-defined vehicles: traditional Event Data Recorder data may show what the vehicle recorded, but not necessarily why the vehicle behaved as it did, whether the recorded signal was complete, or how driver and system responsibility should be allocated. The presentation argues that future investigations need a broader evidentiary basis, combining EDR (Event Data Recorder) data, DSSAD (Data Storage Systems for Automated Driving) data, OEM (Original Equipment Manufacturer, e.g. Tesla) operational data, diagnostics, software and configuration history, safety-case documentation, and independent technical assessment. Current and emerging regulations, including UNECE work on automated driving systems, driver control assistance systems, in-service monitoring, and safety cases, may provide a more structured framework for determining control, causation, and accountability. The broader safety challenge is to move from fragmented post-crash evidence toward continuous safety learning. When automation fails, responsibility should be assessed through transparent data access, robust forensic methods, defensible safety cases, and regulatory frameworks that can address both human error and system-level failure.

Stephan Marwedel, Technical Expert at Airbus

“Safety and security vistas: Looking at dependability and resilience from a room with two windows”

Stephan Marwedel works as a technical expert in the aircraft security group at Airbus Commercial Aircraft. In his role, he is concerned with ensuring that aircraft systems are resilient against cyberattacks which might result in unsafe conditions. Safety is the core of his engineering work and he is actively engaged in promoting a joint vision on safety and security on which the high standards of the aviation industry are based. To that end, he is a member of several international standardization committees, such as EUROCAE (European Organization for Civil Aviation Equipment) WG-72 on Information Security in Aviation, WG-114 on Artificial Intelligence in Aviation, ARINC (Aeronautical Radio, Inc.) NICS (Network Infrastructure, Cybersecurity and Security) and SAE S-18 (Aircraft and System Development and Safety Assessment Committee).

He is the chair of the security subgroup of the joint EUROCAE WG-114 / SAE G34 committee on information security for AI-augmented airborne systems. In his work, he performs security risk assessments for airborne systems, designs appropriate security measures to achieve the protection objectives, defines security test objectives and supports security tests. Furthermore, he provides evidence supporting the required initial and continuing airworthiness activities in cooperation with the airworthiness authorities. Additionally, he is working in a variety of research and pre-development projects aiming to improve security design, implementation and operation of airborne systems along the life cycle for application in existing and future aircraft programs.

Abstract: Any engineering system that exhibits failure conditions with catastrophic or hazardous impacts is subject to stringent assurance activities covering both the initial design, development and test phases as well as the operational and maintenance phases. In such systems, safety is one of the most important contributors of design constraints, as unsafe states of the system are not acceptable and have to be avoided by all means. However, safety has a specific view (a vista) of a system: only random technical failures of system components contributing to hazardous failure conditions are considered in a safety assessment. The outcome of the safety assessment is an important source of additional requirements for the system under design. Validation of all system requirements and verification of their implementation, together with additional assurance activities, result in certification of the system. Users trust that the certified system can be regarded as sufficiently safe for daily operation. In contrast to safety, security has a different vista. It looks at human actors trying to deliberately compromise a system by unauthorized electronic interactions with the intent to cause loss of integrity or availability, ultimately resulting in failure conditions which are potentially harmful to the users of a system or to its environment. A security risk assessment is performed to identify the major risks and to define adequate protections to prevent successful attacks. Evidence is then collected in the form of requirements validation and security refutation tests to demonstrate the effectiveness of the protections. This evidence forms the basis for the security certification of the system and supports users’ trust that the system is adequately protected against attacks. Modern systems include an increasing number of communication, connectivity and data processing functions that were not present in previous designs. Apart from the additional intended functionality, these functions contribute to a much enlarged attack surface, leading to increased effort for security architecture design and certification. This is exacerbated even more when considering autonomous systems, as human operators are not available as a fallback safety measure. In such systems, functions which were not safety-relevant in conventional systems typically do become relevant for safety, e.g. digital communication links or AI-enabled processing functions. In this talk, we present a third vista, i.e. a joint view on modern systems design and development trying to reconcile the different viewpoints of the safety and the security vistas with the aim to produce a single coherent set of system requirements that will cover both the safety and the security perspectives. We will discuss the necessary trade-offs between safety and security that may need to be made in modern system designs in order to justify trust in both the safety and the security properties of a system.

Gesa Praetorius, Swedish National Road and Transport Research Institute (VTI)

“Designing for joint work in maritime operations”

Dr. Gesa Praetorius is a Human Factors expert with a background in Cognitive Science and a PhD in Shipping Technology (Maritime Human Factors) from Chalmers University of Technology (Sweden). She currently works as Senior Researcher at the Swedish National Road and Transport Research Institute (VTI) and holds adjunct positions at Linköping University (Sweden) and the Western Norway University of Applied Sciences (Norway). In her research she applies concepts from Cognitive Systems Engineering and Resilience Engineering to explore the interplay between humans, technology and organizational settings in complex safety-critical systems. Praetorius’ current research focuses on the design of joint human-automation systems for resilient performance.

Abstract: Maritime transport is considered the backbone of the global economy and more than 80% of all goods are transported by the international merchant fleet. While the maritime domain is often considered to be very traditional, recent technological development has led to a rapid increase in automation and autonomy. This development raises a need to rethink the way in which we design work processes across humans and technology. This will be the starting point for my talk, in which I would like to discuss how we can approach design for joint activities in human-machine systems in safety-critical domains. The talk will address how maritime operators imagine joint work and what they would value in collaborating with advanced automation. I will provide examples of how we can approach the design of joint systems and activities, as well as the lessons that we have learnt along the way.

IWASS Location & Accommodation Information

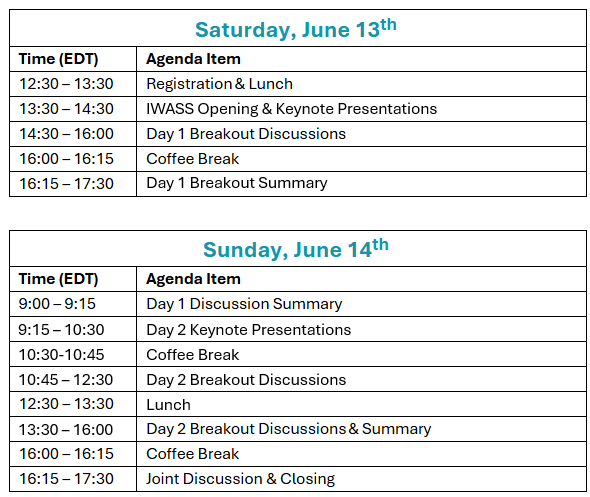

IWASS Tentative Program

As in previous years, IWASS focuses on discussing challenges and proposing solutions concerning autonomous systems' safety, reliability, and security. After a short opening session, the participants will be split into breakout discussion groups with dedicated topics.

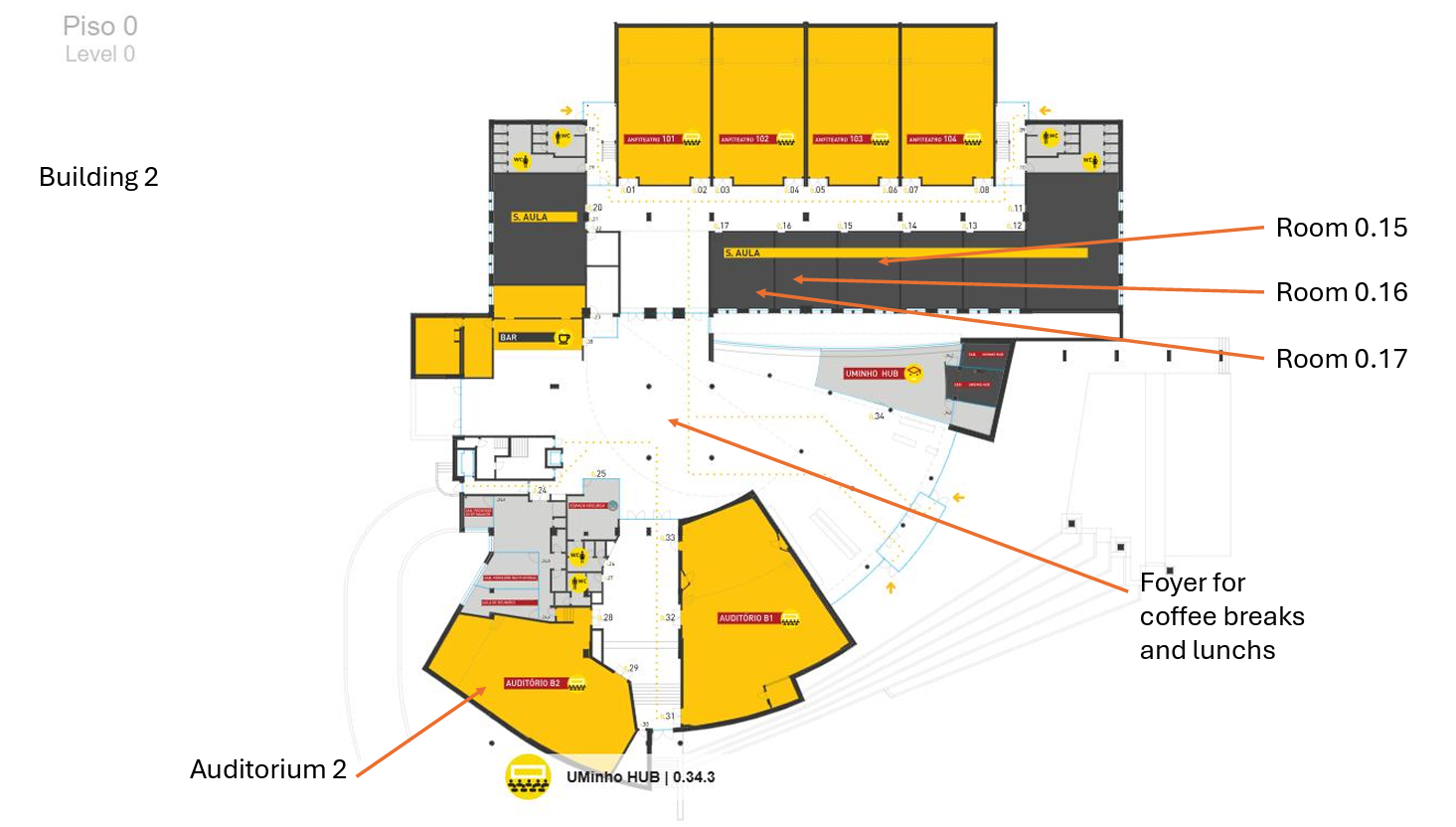

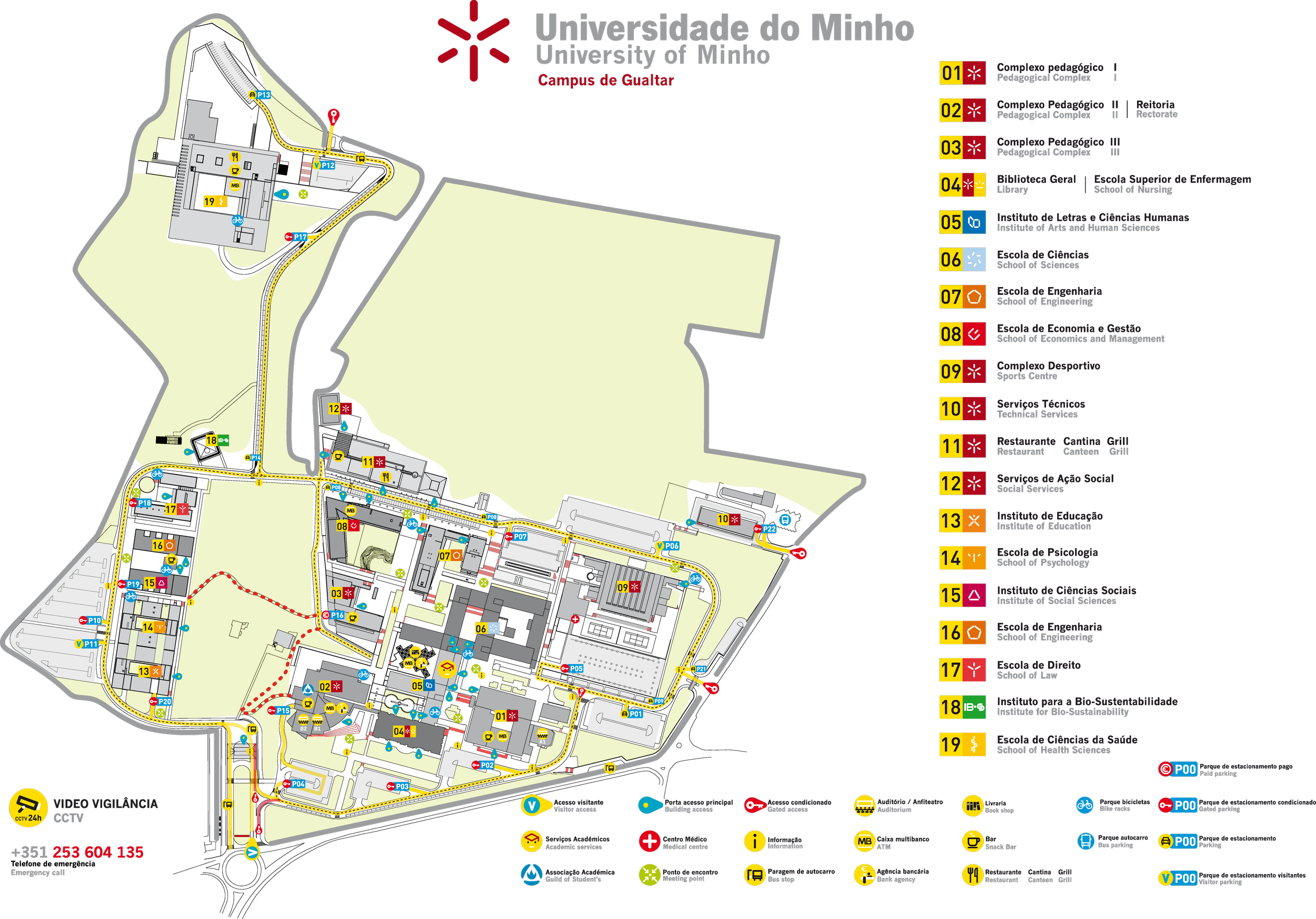

Where: Universidade do Minho, Campus de Gualtar, Building 2; 4710-057 Braga, Portugal

Find more information at: https://www.uminho.pt/EN/student-life/campi/Pages/Maps.aspx